Dr. AI, prostate cancer, & more

Extra! Extra! AI itself reports exclusively on the future of AS for TheActiveSurveillor

By Howard Wolinsky

Artificial intelligence (AI) is an overnight sensation.

Within a week of its Nov. 30, 2022, release by OpenAI, ChatGPT became the most widely used and influential AI chatbot in history.

(GPT is short for Generative Pre-trained Transformer, a type of language model trained on a massive collection of text data from the internet, allowing it to generate human-like text in response to a prompt.)

(Do you trust robots?)

Suddenly, ChatGPT is passing the U.S. Medical Licensing Exam. It also has passed exams for lawyers and MBA students. Sometimes barely.

Could AI become your doctor, lawyer, and accountant, all rolled into one package with one-stop service?

ChatGPT is a large language model (LLM), which generates convincing sentences by mimicking the statistical patterns of language in a huge database of text collated from the internet. It is shaking up academia, medicine, science, and everything else.

It will soon be coming to browsers near you, incorporated in search engines, such as Google and Bing, making AI part of our digital lives.

ChatGPT has had author credits on scientific articles, raising questions about who is an author and whether an AI system can truly be an author since it cannot grant consent in any way humans understand.

AI is not infallible. Its errors are referred to as “AI hallucinations,” inaccurate statements made with great confidence.

Ethicists have found a mother lode that could last through the end of the century, mulling such issues as: Should Johnny and Janie use AI to write their school papers? (Isn’t the genie already out of the bottle?) Are AI bots going to replace our docs? Should AI be restricted to the lab or allowed to “live” in the real world?

Check out neurologist Benjamin Tolchin, inaugural director of the Yale New Haven Health System Center for Clinical Ethics, in MedPage Today: “Are AI Chatbots in Healthcare Ethical? Their use must require informed consent and independent review.”

(Note: I had my son David ask ChatGPT about the future of AS. ChatGPT touts AI’s potential while trying to allay concerns that AI will take over. Beware. Here’s the result. What do you think?)

AI on AS: Future’s bright, gotta wear shades

By ChatGPT

Active surveillance is an increasingly popular approach for managing low-risk prostate cancer, as it offers a way to monitor the progression of the disease without resorting to immediate treatment. The future of active surveillance looks bright, with the introduction of new technologies such as AI, which has the potential to revolutionize the way we monitor and manage prostate cancer.

One of the most exciting areas of AI in the field of active surveillance is its ability to read MRI scans and biopsy slides. By using advanced algorithms and machine learning, AI can analyze medical images and identify signs of prostate cancer, making the diagnosis process quicker, more accurate and less invasive. This can provide doctors with a better understanding of the progression of the disease and help them to make more informed decisions about treatment.

In addition to its ability to read medical images, AI can also analyze patient data and provide personalized recommendations based on a patient's individual risk factors. This can help doctors identify the patients most likely to benefit from active surveillance and those who might need more aggressive treatment.

However, it's important to note that AI is still in its early stages and more research is needed to fully understand its potential in the field of active surveillance. Nevertheless, the future looks promising and it's likely that AI will play a significant role in the management of prostate cancer in the years to come.

In conclusion, the future of active surveillance for low-risk prostate cancer is exciting, and AI has the potential to revolutionize the way we monitor and manage this disease. By using AI to read MRI scans and biopsy slides, doctors can make more informed decisions about treatment, and patients can benefit from a more personalized approach to their care.

Those of us in the prostate cancer trenches have been hearing a lot about how AI is being incorporated into reading mpMRIs and biopsy slides.

Back in July 2021 (ancient times for AI), I organized and moderated a program, “Son, I have prostate cancer. What do I do now?” featuring Dr. Niels Olson, pathologist, researcher, and chief medical officer for the Defense Innovation Unit.

Jonathan Epstein, MD, the top-gun urologic pathologist from Johns Hopkins, who reads slides for 12,000 patients per year, told me recently that AI will not replace human judgment. He sees AI as a backup to human eyes and brains, not the primary driver of biopsies and autopsies.

Epstein is the best at reading pathology slides, but is he that good at reading the tea leaves for future tech?

Will AI be a screening tool for a first or second opinion? Will humans have the final word?

No doubt all this data, plus genomic and germline reports and doctors’ notes, will be merged to develop overall reads and opinions.

AI has emerged as a transformative tool in treatment planning for radiation therapy. AI supports radiation oncologists in their effort to localize the treatment of tumors while minimizing the effects of radiation on healthy tissue.

Robotic devices already dominate the surgical side of prostate cancer treatment. Patients put their faith in surgeons using the Da Vinci robot; popular opinion has virtually knocked out the model of surgeons performing open surgery with scalpel in hand.

The field is cued up to use AI in Da Vinci robotic surgery. The devices could operate on their own, just like the still-problematic autonomous cars. Check out Google and Johnson & Johnson working on new surgical robots.

Check this comment on an article on AI (AtlDoc, replying to Robert_W_Tucker, 15 days ago):

You don't think we can integrate AI with a DaVinci robot to do an appy? Think again.

How will autonomous robots play in the minds of patients?

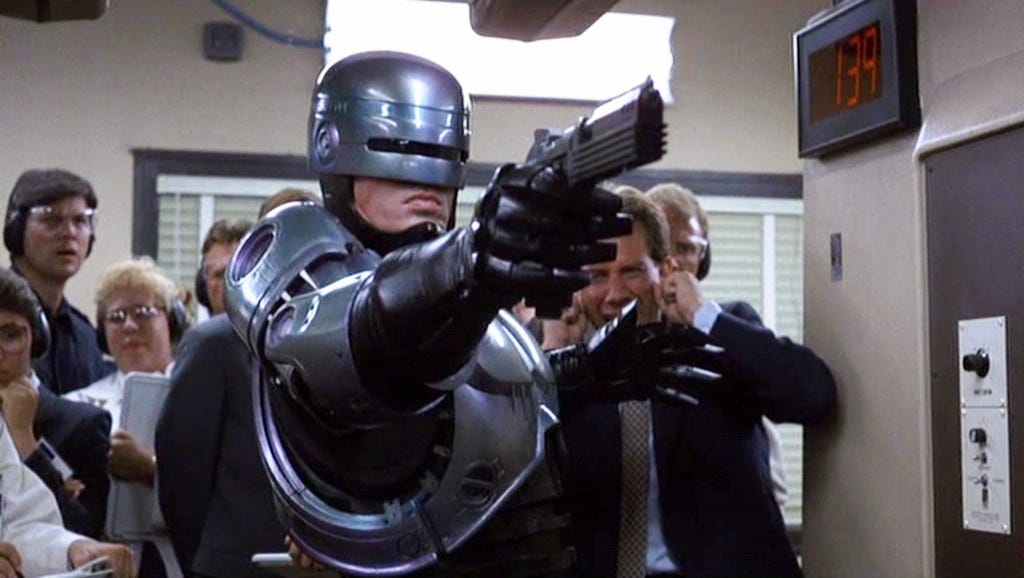

Expect some who trusted Da Vinci to have second thoughts about robots doing surgery on their own. Would you trust Robosurgeon any more than we did Robocop?

Insurers may find AI an attractive way to keep medical costs down.

But will humans revolt against AI medicine?

It’s already happened, as ethicist Tolchin describes:

Koko, a free peer-to-peer therapy platform, used ChatGPT to generate therapeutic comments for users who were experiencing psychological distress. Users on the platform who wished to send supportive comments to other users could send AI-generated comments rather than formulating their own messages.

Koko's co-founder Rob Morris reported: "Messages composed by AI (and supervised by humans) were rated significantly higher than those written by humans on their own," and "Response times went down 50%, to well under a minute."

But patients freaked out when they learned AI was used to send those messages.

How would you like to see Max Headroom as a moderator in your prostate cancer support group? Might be fun to hear someone speaking from 20 minutes into the future when AI is moving faster than the speed of the internet, right?

The ultimate AI/robo dystopia can be seen in the Terminator movies and the Flight of the Conchords song “The Humans Are Dead.”

The Conchords captured robo destruction of civilization: “The humans are dead, The humans are dead. We used poisonous gasses. And we poisoned their asses. The humans are dead. (Yes they are dead.) The humans are dead. (I confirm they are dead.) It had to be done So that we can have fun.” Affirmative.

These are the extreme cases of course. But we need to put an unblinking eye on this rapidly spreading tech.

<sigh>

"Wow, I feel sooo groggy."

"Just rest now, sir, here take a sip of water."

"OK, so how did I do?"

"Well, there was a small problem."

"PROBLEM?! What problem?"

"The robot confused orchidectomy with prostatectomy."